Method of Vision-Task-Friendly Underwater Image Enhancement


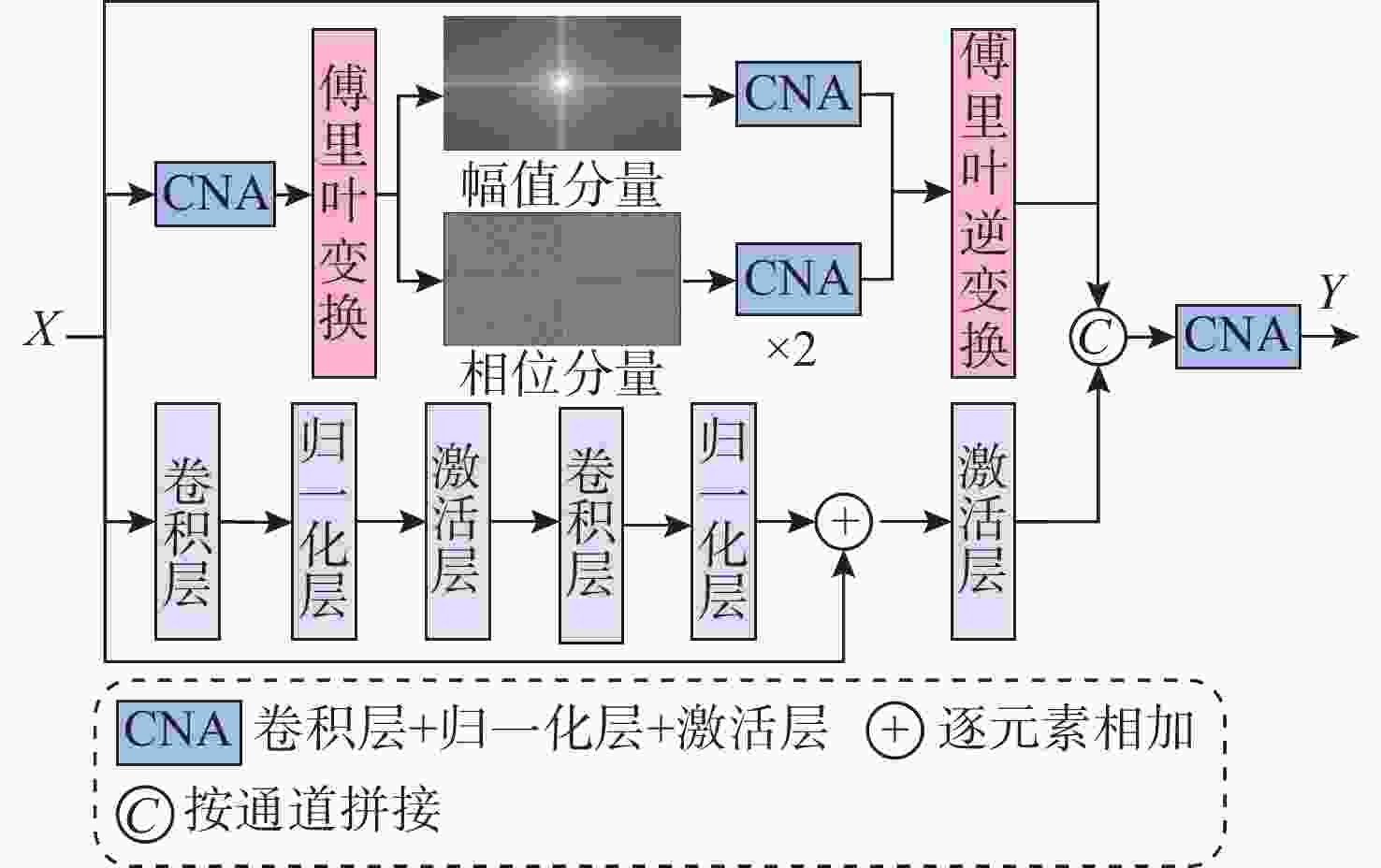

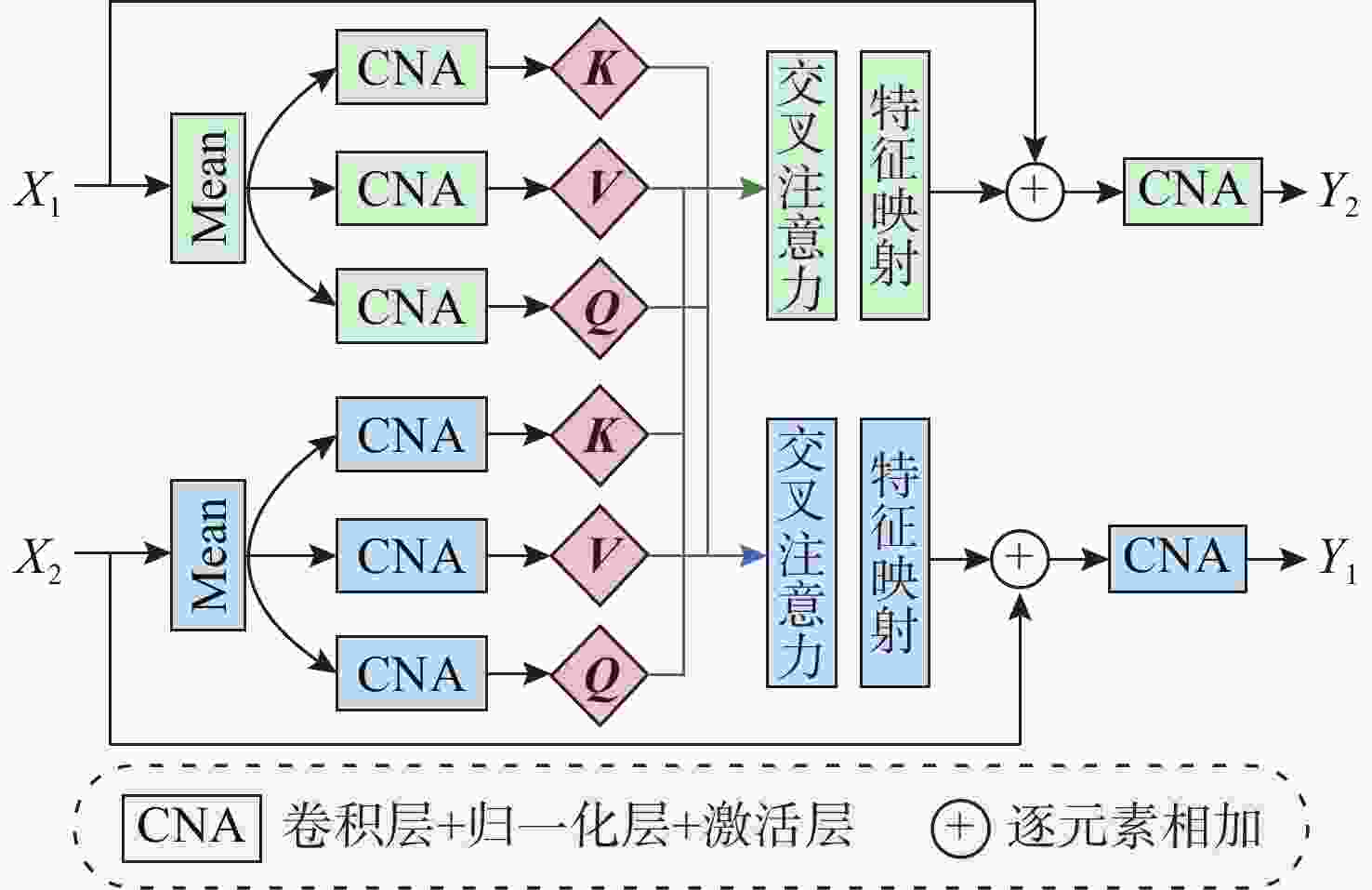

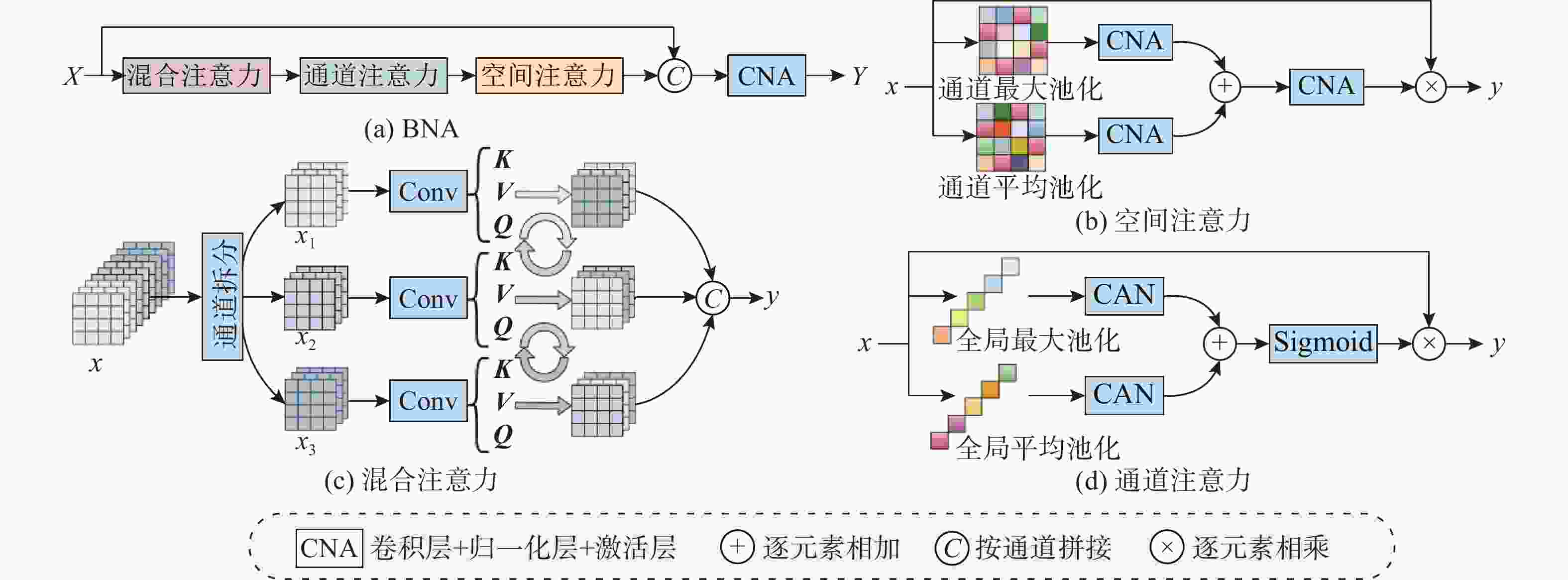

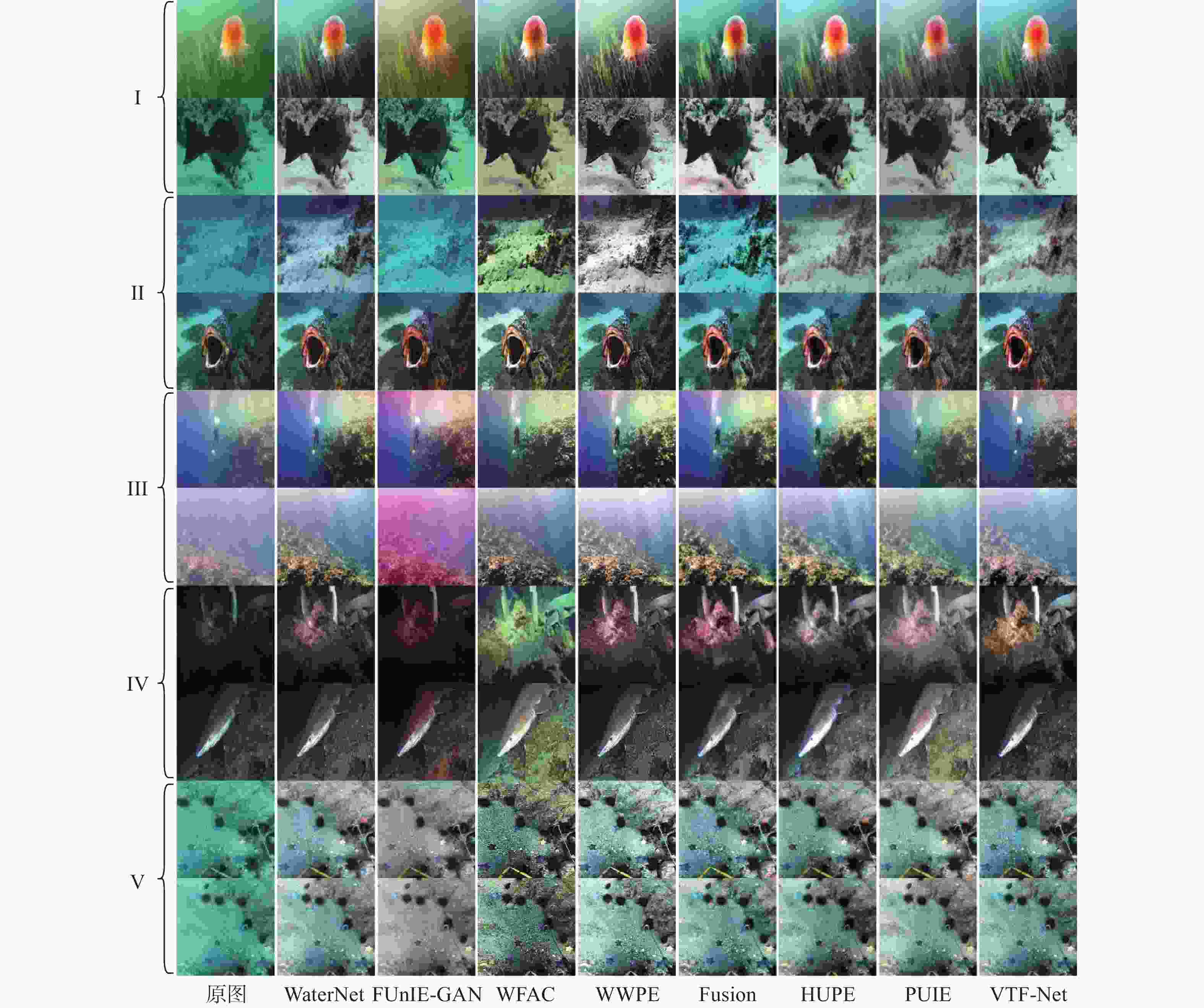

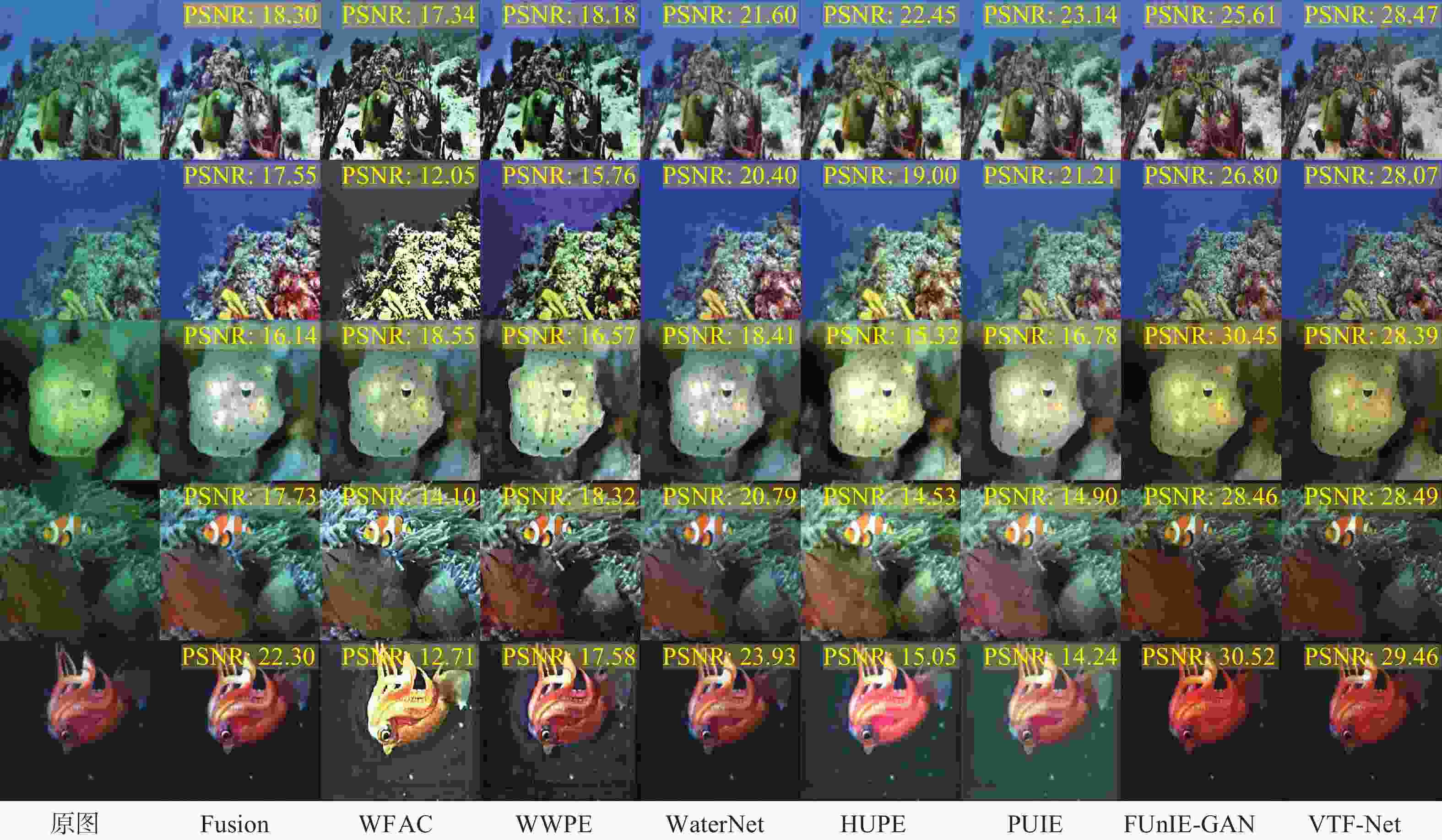

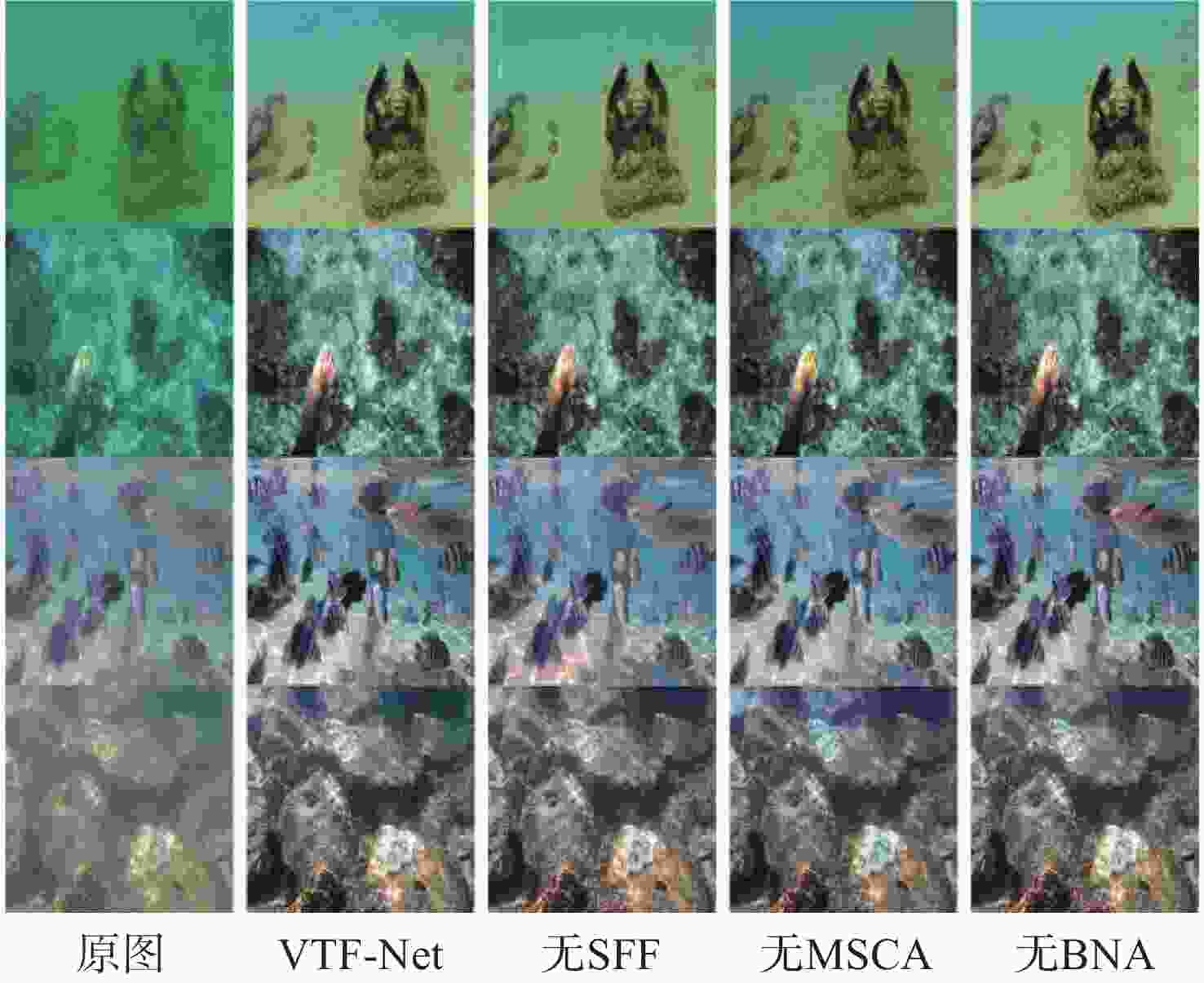

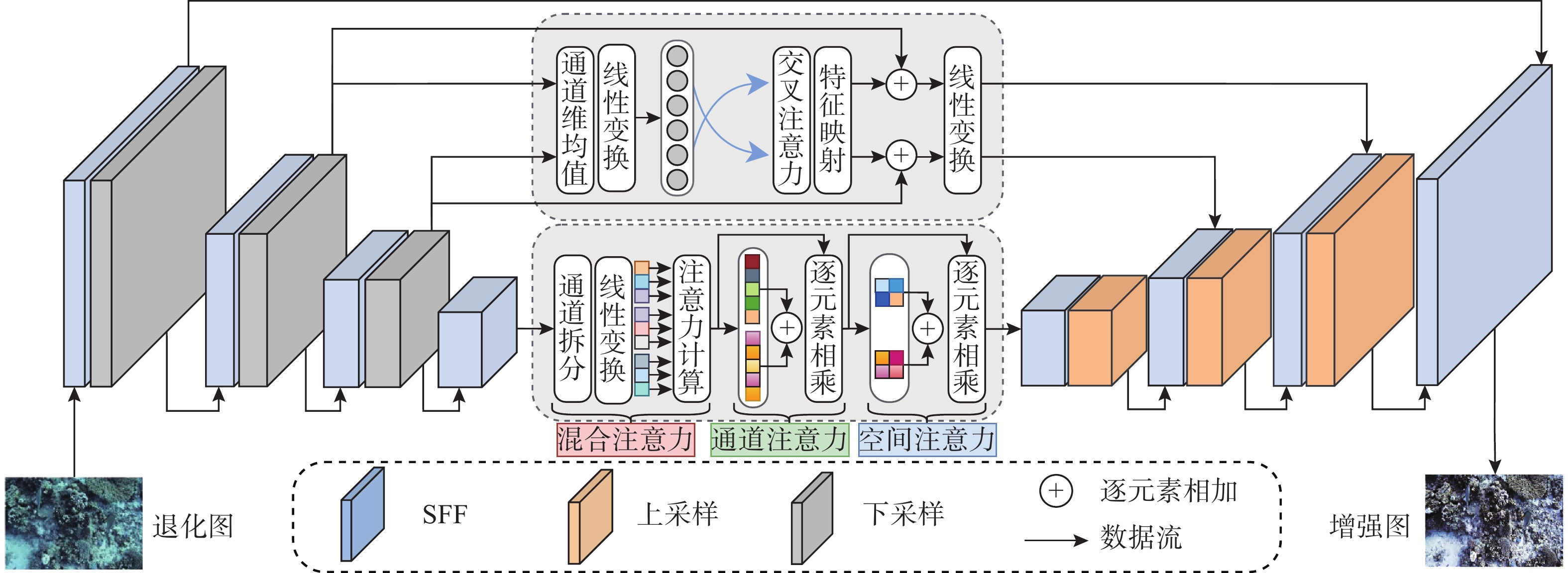

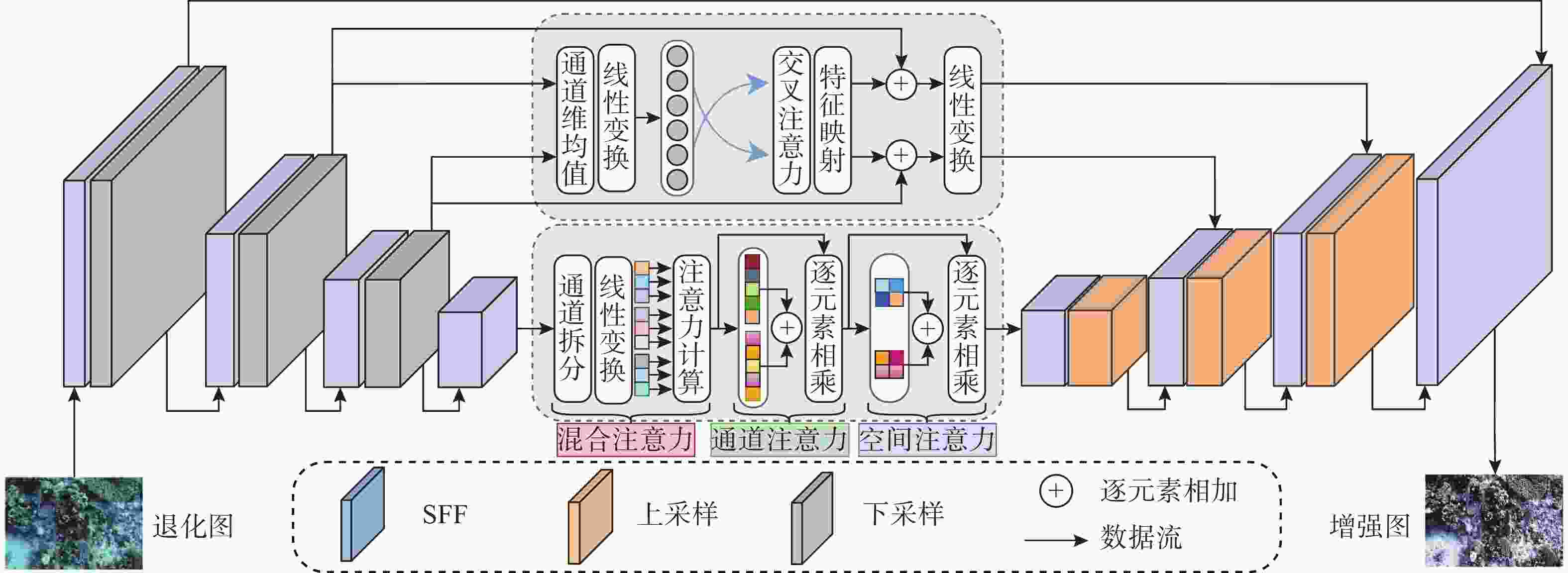

Abstract: Underwater images suffer from severe color and structural distortion, which degrades the performance of underwater vision tasks. Existing underwater image enhancement methods focus on improving visual appearance and overlook the need to optimize for downstream vision tasks. To address this, this paper proposes a vision-task-friendly underwater image enhancement network (VTF-Net). First, a new spatial-frequency fusion enhancement module (SFF) is designed to improve the model's perception of texture details and the fidelity of the enhanced image. Second, to pass information efficiently between the encoder and the decoder, a multi-scale cross-attention module (MSCA) and a bottleneck attention module (BNA) are introduced; they add perception of global gradients while preserving efficient feature extraction, which alleviates color cast and blur. Finally, in line with the vision-task-friendly concept, a detection loss function is proposed that uses underwater object detection results to guide the direction of model optimization. Experimental results show that the proposed method achieves better results in both qualitative and quantitative evaluations and obtains the best result in the underwater object detection application experiment.
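The abstract states that a detection loss steers optimization but does not give its form. The sketch below shows one generic way such a task-guided objective can be assembled, as a weighted sum of a pixel-level enhancement term and a detector-derived term. Everything in it is an assumption made for illustration: the names `l1_loss`, `detection_term`, `total_loss`, the weight `lambda_det`, and the choice of "one minus mean detector confidence" as the detection term are hypothetical and are not taken from the paper.

```python
def l1_loss(enhanced, reference):
    """Mean absolute error between two equally sized pixel sequences."""
    if len(enhanced) != len(reference) or not enhanced:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(e - r) for e, r in zip(enhanced, reference)) / len(enhanced)


def detection_term(confidences):
    """Hypothetical detection penalty: 1 minus the mean detector confidence.

    An image on which the detector finds nothing gets the maximum penalty 1.0.
    This is an illustrative stand-in, not the paper's detection loss.
    """
    if not confidences:
        return 1.0
    return 1.0 - sum(confidences) / len(confidences)


def total_loss(enhanced, reference, confidences, lambda_det=0.1):
    """Weighted sum of the enhancement term and the detection term.

    lambda_det is an assumed weighting hyperparameter.
    """
    return l1_loss(enhanced, reference) + lambda_det * detection_term(confidences)


if __name__ == "__main__":
    # Pixel values in [0, 1]; confidences as produced by some detector.
    print(total_loss([0.0, 1.0], [1.0, 1.0], [0.5]))
```

With this structure, an enhanced image that matches the reference but yields weak detections is still penalized, which is the general idea behind making an enhancement network task-friendly.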


Table 1. Quantitative comparison of different methods on the UFO120 and EUVP515 test sets

| Method | UFO120 PSNR↑ | UFO120 SSIM↑ | UFO120 UIQM↑ | UFO120 UCIQE↑ | EUVP515 PSNR↑ | EUVP515 SSIM↑ | EUVP515 UIQM↑ | EUVP515 UCIQE↑ | Parameters (×10^6) | Time per frame (s) |
|---|---|---|---|---|---|---|---|---|---|---|
| Fusion | 17.58 | 0.81 | 5.10 | 0.45 | 17.63 | 0.81 | 4.32 | 0.43 | - | 0.240 |
| WFAC | 15.37 | 0.68 | 4.01 | 0.43 | 15.65 | 0.69 | 3.93 | 0.43 | - | 0.359 |
| WWPE | 15.64 | 0.69 | 4.37 | 0.45 | 15.84 | 0.69 | 4.15 | 0.45 | - | 0.388 |
| WaterNet | 19.70 | 0.86 | 4.57 | 0.43 | 19.68 | 0.86 | 4.38 | 0.44 | 1.09 | 0.034 |
| HUPE | 18.22 | 0.83 | 4.70 | 0.44 | 18.07 | 0.82 | 4.38 | 0.44 | 2.05 | 1.360 |
| PUIE | 19.00 | 0.84 | 4.28 | 0.41 | 19.10 | 0.84 | 4.01 | 0.40 | 1.01 | 0.545 |
| FUnIE-GAN | 24.83 | 0.85 | 4.64 | 0.43 | 23.52 | 0.85 | 4.32 | 0.43 | 7.02 | 0.094 |
| VTF-Net | 25.32 | 0.87 | 4.77 | 0.45 | 24.77 | 0.86 | 4.33 | 0.43 | 6.50 | 0.164 |
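For readers unfamiliar with the PSNR column, the sketch below computes peak signal-to-noise ratio from its standard definition, 10·log10(MAX² / MSE), over flat sequences of pixel values. The function name `psnr` and the default `max_value=255.0` (8-bit images) are assumptions for this example; the evaluation code used to produce the table is not given in the source.

```python
import math


def psnr(reference, enhanced, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized pixel sequences.

    Returns math.inf when the two inputs are identical (zero mean squared error).
    """
    if len(reference) != len(enhanced) or not reference:
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((r - e) ** 2 for r, e in zip(reference, enhanced)) / len(reference)
    if mse == 0:
        return math.inf
    return 10.0 * math.log10(max_value ** 2 / mse)


if __name__ == "__main__":
    ref = [100, 100, 100, 100]
    out = [110, 90, 110, 90]  # every pixel is off by 10, so MSE = 100
    print(round(psnr(ref, out), 2))
```

Higher values mean the enhanced image is closer to the reference, which is why the column is marked with an upward arrow.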

Table 2. Ablation results for each module

| Configuration | PSNR↑ | SSIM↑ | UIQM↑ | UCIQE↑ |
|---|---|---|---|---|
| VTF-Net | 22.819 | 0.834 | 4.204 | 0.396 |
| Without SFF | 21.540 | 0.821 | 4.117 | 0.367 |
| Without MSCA | 21.847 | 0.829 | 4.177 | 0.392 |
| Without BNA | 22.631 | 0.832 | 4.089 | 0.394 |
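To make the ablation easier to read, the following sketch computes how much each metric drops when one module is removed, using only the values reported in Table 2. The dictionary layout and the helper name `metric_drops` are choices made for this example.

```python
# Values copied from Table 2 (ablation study).
ABLATION = {
    "VTF-Net": {"PSNR": 22.819, "SSIM": 0.834, "UIQM": 4.204, "UCIQE": 0.396},
    "Without SFF": {"PSNR": 21.540, "SSIM": 0.821, "UIQM": 4.117, "UCIQE": 0.367},
    "Without MSCA": {"PSNR": 21.847, "SSIM": 0.829, "UIQM": 4.177, "UCIQE": 0.392},
    "Without BNA": {"PSNR": 22.631, "SSIM": 0.832, "UIQM": 4.089, "UCIQE": 0.394},
}


def metric_drops(table, full="VTF-Net"):
    """Return full-model score minus ablated score for every metric and variant."""
    base = table[full]
    return {
        name: {metric: round(base[metric] - row[metric], 3) for metric in base}
        for name, row in table.items()
        if name != full
    }


if __name__ == "__main__":
    drops = metric_drops(ABLATION)
    for name, row in drops.items():
        print(name, row)
    # Variant whose removal costs the most PSNR.
    print(max(drops, key=lambda name: drops[name]["PSNR"]))
```

On these numbers, removing SFF costs the most PSNR (1.279 dB), followed by MSCA (0.972 dB) and BNA (0.188 dB), while removing BNA gives the largest UIQM drop (0.115).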

Table 3. Comparison of detection results for different algorithms

| Metric | Fusion | WFAC | WWPE | WaterNet | HUPE | PUIE | FUnIE-GAN | VTF-Net |
|---|---|---|---|---|---|---|---|---|
| Number of targets | 57 | 67 | 51 | 57 | 42 | 59 | 47 | 83 |
| mAP | 0.898 | 0.890 | 0.891 | 0.878 | 0.897 | 0.898 | 0.884 | 0.901 |

