Domain-Adaptive Underwater Target Detection Method Based on GPA + CBAM


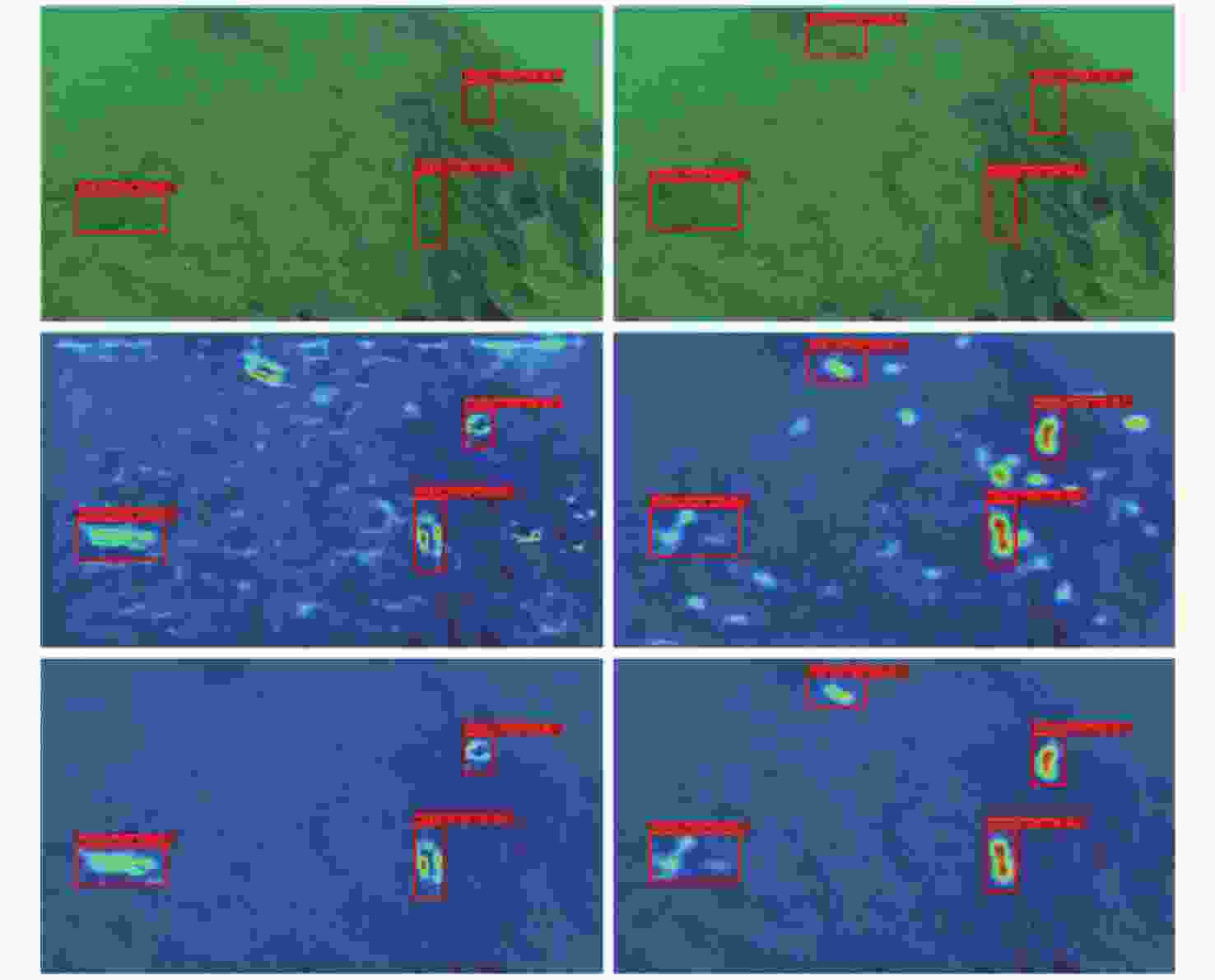

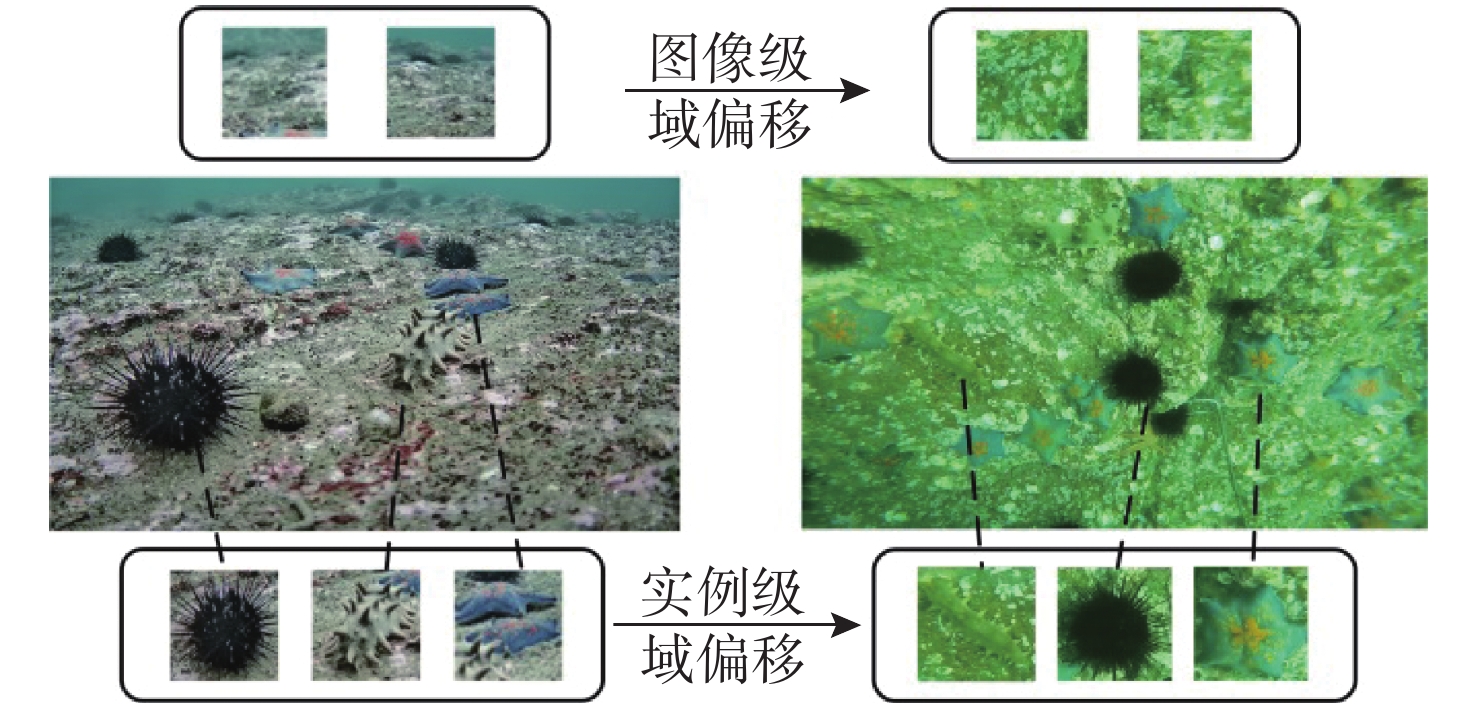

Abstract: Underwater target detection is prone to domain shift, which degrades detection accuracy. To address this, the paper proposes a domain-adaptive underwater target detection method based on graph-induced prototype alignment (GPA). GPA obtains instance-level features in an image through graph-based information propagation among region proposals, then derives a prototype representation for each category and uses it for category-level domain alignment. This aggregates the different modal information of underwater targets, aligns the source and target domains, and reduces the impact of domain shift. In addition, a convolutional block attention module (CBAM) is added so that the network focuses on instance-level features under different water-domain distributions. Experimental results show that the proposed method effectively improves detection accuracy when domain shift occurs.
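The pipeline described in the abstract (graph-based propagation among region proposals, per-category prototypes, category-level alignment) can be illustrated with a minimal pure-Python sketch. This is not the authors' implementation: the function names, the IoU-weighted averaging used as the graph step, and the squared-distance alignment loss are all assumptions chosen for illustration, and CBAM is omitted.

```python
from typing import List, Sequence

Box = Sequence[float]      # (x1, y1, x2, y2)
Vector = List[float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def propagate(boxes: List[Box], feats: List[Vector]) -> List[Vector]:
    """Assumed graph step: each proposal's feature becomes the IoU-weighted
    mean of all proposal features (a proposal overlaps itself with IoU 1)."""
    dim = len(feats[0])
    out = []
    for i, bi in enumerate(boxes):
        w = [iou(bi, bj) for bj in boxes]
        total = sum(w)
        if total == 0:  # degenerate box: keep the original feature
            out.append(list(feats[i]))
            continue
        out.append([sum(wj * fj[d] for wj, fj in zip(w, feats)) / total
                    for d in range(dim)])
    return out


def class_prototypes(feats: List[Vector], probs: List[Vector]) -> List[Vector]:
    """Confidence-weighted mean feature per category (one prototype each)."""
    dim, num_classes = len(feats[0]), len(probs[0])
    protos = []
    for c in range(num_classes):
        total = sum(p[c] for p in probs)
        if total == 0:  # category absent in this batch
            protos.append([0.0] * dim)
            continue
        protos.append([sum(p[c] * f[d] for p, f in zip(probs, feats)) / total
                       for d in range(dim)])
    return protos


def alignment_loss(src: List[Vector], tgt: List[Vector]) -> float:
    """Assumed loss: mean squared distance between same-category prototypes."""
    return sum(sum((s - t) ** 2 for s, t in zip(ps, pt))
               for ps, pt in zip(src, tgt)) / len(src)


if __name__ == "__main__":
    boxes = [(0, 0, 2, 2), (0, 0, 2, 2), (10, 10, 12, 12)]
    feats = [[1.0, 0.0], [3.0, 0.0], [0.0, 5.0]]
    probs = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
    inst = propagate(boxes, feats)
    print(class_prototypes(inst, probs))
```

In a domain-adaptive detector, the prototypes would be computed separately on source and target batches, and a loss of this kind would pull same-category prototypes together across domains.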


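Table 1. Experimental results on the underwater dataset and the public dataset (accuracy, %)

| Dataset | Category | Baseline | GPA | DA | Proposed method |
| --- | --- | --- | --- | --- | --- |
| URPC2020→HMRD | Sea cucumber | 41.7 | 48.1 | 49.2 | 52.0 |
| URPC2020→HMRD | Sea urchin | 62.3 | 67.9 | 68.1 | 69.7 |
| URPC2020→HMRD | Starfish | 47.4 | 54.7 | 58.6 | 60.1 |
| URPC2020→HMRD | Average | 50.3 | 56.9 | 58.6 | 60.6 |
| VOC12→VOC07 | Bicycle | 73.7 | 76.0 | 77.0 | 74.5 |
| VOC12→VOC07 | Bird | 69.3 | 68.1 | 75.0 | 68.6 |
| VOC12→VOC07 | Car | 72.1 | 74.2 | 82.1 | 74.6 |
| VOC12→VOC07 | Cow | 77.2 | 75.3 | 79.3 | 77.1 |
| VOC12→VOC07 | Dog | 80.0 | 84.2 | 83.3 | 83.4 |
| VOC12→VOC07 | Person | 76.2 | 77.8 | 76.0 | 77.6 |
| VOC12→VOC07 | Sofa | 60.1 | 61.8 | 71.4 | 63.5 |
| VOC12→VOC07 | Train | 88.3 | 85.9 | 77.6 | 88.5 |
| VOC12→VOC07 | Average | 74.6 | 75.4 | 77.7 | 76.0 |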

Table 2. Experimental results on the public dataset Sim10k→Cityscapes

| Method | Accuracy/% |
| --- | --- |
| Baseline | 34.9 |
| GPA | 46.1 |
| DA | 45.8 |
| Proposed method | 48.3 |
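To read the tables at a glance, the short sketch below computes the gap in percentage points between the proposed method and each comparison method, using only the average rows of Table 1 and the single row of Table 2. The dictionary layout and the helper name `gain_of_proposed` are illustrative choices, not part of the paper.

```python
# Average accuracies (%) copied from Table 1 and Table 2.
AVERAGES = {
    "URPC2020→HMRD": {"Baseline": 50.3, "GPA": 56.9, "DA": 58.6, "Proposed": 60.6},
    "VOC12→VOC07": {"Baseline": 74.6, "GPA": 75.4, "DA": 77.7, "Proposed": 76.0},
    "Sim10k→Cityscapes": {"Baseline": 34.9, "GPA": 46.1, "DA": 45.8, "Proposed": 48.3},
}


def gain_of_proposed(results: dict) -> dict:
    """Percentage-point difference: proposed method minus each other method."""
    proposed = results["Proposed"]
    return {name: round(proposed - value, 1)
            for name, value in results.items() if name != "Proposed"}


if __name__ == "__main__":
    for task, results in AVERAGES.items():
        print(task, gain_of_proposed(results))
```

On these numbers the proposed method leads every comparison on the underwater task and on Sim10k→Cityscapes, while on VOC12→VOC07 its average sits 1.7 points below the DA column.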

